Hello World: Quantum Machine Learning with MerLin (Cloud)

Welcome! This notebook demonstrates how to use MerLin to build a quantum reservoir algorithm and run it on a real QPU (or, in this case, a simulator emulating one). We chose a reservoir algorithm because gradients are not propagated through quantum layers when they run on a processor, and the quantum layer of a reservoir does not need to be trained.

To use the processor, follow the TODO comments.

1. Install and Import Dependencies

Run pip install merlinquantum in your terminal, or !pip install merlinquantum in your notebook.

[1]:

import torch

import torch.nn as nn

import merlin as ML

import perceval as pcvl

from merlin.datasets import iris

To run your experiments on a processor (real QPU or a simulator emulating it), we need to create a MerlinProcessor object.

[2]:

# (OPTIONAL) Save your token in a file called .env in the same directory as this notebook, with the following content:

# CLOUD_TOKEN=your_token_here

## TODO Uncomment to load the token

# from dotenv import load_dotenv

# load_dotenv()

# import os

# CLOUD_TOKEN = os.getenv("CLOUD_TOKEN")
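If the token is missing, os.getenv silently returns None and the failure only shows up later, when the cloud is contacted. The following is a minimal sketch of an early check; require_token is a hypothetical helper name, and CLOUD_TOKEN is the variable name used in the cell above.

```python
import os


def require_token(name: str = "CLOUD_TOKEN") -> str:
    """Return the token stored in the environment variable `name`, or raise early."""
    token = os.getenv(name)
    if not token:
        # Covers both a missing variable and an empty string
        raise RuntimeError(
            f"{name} is not set; add it to your .env file or export it in your shell."
        )
    return token


# Usage, after load_dotenv() has run:
# CLOUD_TOKEN = require_token()
```

Failing at this point gives a clearer error message than an authentication failure in the middle of a run.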

Let’s first load a Perceval RemoteProcessor, which is the original way of accessing Quandela’s cloud. For Scaleway-hosted platforms and any future session-based providers, use pcvl.providers.scaleway instead of pcvl.RemoteProcessor.

Here we use sim:slos, which is a noise-free simulator. Use sim:belenos if you want a simulator that reproduces the noise of the qpu:ascella QPU.

[3]:

## TODO Uncomment to use the processor

# pcvl.RemoteConfig.set_token(CLOUD_TOKEN)

# rp = pcvl.RemoteProcessor("sim:slos")

[4]:

## TODO Uncomment to use the processor
# proc = ML.MerlinProcessor(
#     rp,
#     microbatch_size=32,  # batch chunk size per cloud call
#     timeout=3600.0,  # default wall-time per forward (seconds)
#     max_shots_per_call=None,  # optional cap per cloud call (see below)
#     chunk_concurrency=1,  # parallel chunk jobs within a quantum leaf
# )

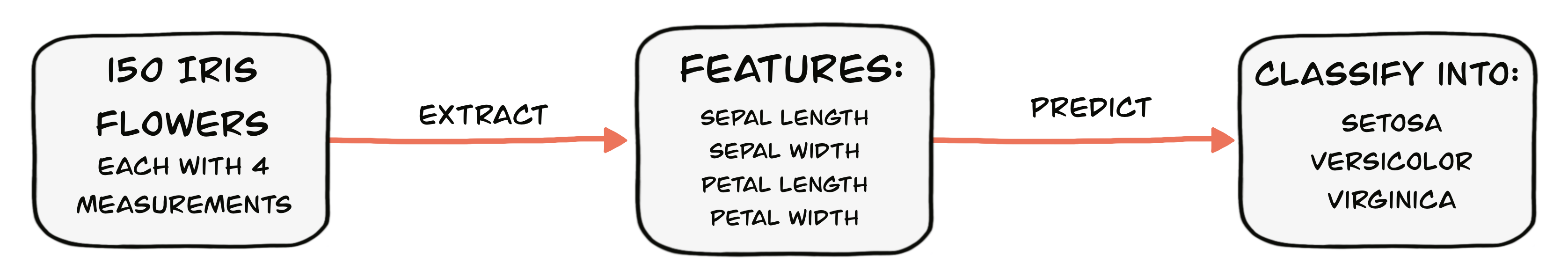
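To make the microbatch_size comment concrete, here is a plain-Python sketch of how a batch could be cut into chunks of at most 32 samples. It only illustrates the idea of "batch chunk size per cloud call"; split_into_chunks is a hypothetical helper, not MerLin's internal implementation, and 120 is the size of the Iris training split used later in this notebook.

```python
def split_into_chunks(n_samples: int, chunk_size: int) -> list[int]:
    """Return the sizes of consecutive chunks that together cover n_samples items."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    return [
        min(chunk_size, n_samples - start)
        for start in range(0, n_samples, chunk_size)
    ]


chunk_sizes = split_into_chunks(120, 32)
print(chunk_sizes)  # [32, 32, 32, 24]
```

Under this assumption, one forward pass over 120 samples would be sent as four chunks, the last one smaller than the others.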

2. Load and Prepare the Iris Dataset

[5]:

train_features, train_labels, train_metadata = iris.get_data_train()

test_features, test_labels, test_metadata = iris.get_data_test()

# Convert data to PyTorch tensors

X_train = torch.FloatTensor(train_features)

y_train = torch.LongTensor(train_labels)

X_test = torch.FloatTensor(test_features)

y_test = torch.LongTensor(test_labels)

print(f"Training samples: {X_train.shape[0]}")

print(f"Test samples: {X_test.shape[0]}")

print(f"Features: {X_train.shape[1]}")

print(f"Classes: {len(torch.unique(y_train))}")

Training samples: 120

Test samples: 30

Features: 4

Classes: 3

3. Define the Quantum Reservoir Model

The model can be split into two parts:

- The QuantumLayer implements the quantum reservoir.
- The classical_out is the classical model that takes the reservoir’s output to classify the data.

To make sure that the model runs on the processor, we need to call the processor’s forward method. We also need to redefine the parameters method so that only the classical parameters are updated and not the reservoir’s (MerLin’s simple quantum layer creates trainable PyTorch parameters by default).

[6]:

class HybridIrisClassifier(nn.Module):
    """
    Hybrid model for Iris classification:
    - Quantum reservoir processes the 4 features
    - Classical output layer for 3-class classification
    """

    def __init__(self):
        super().__init__()
        # Quantum layer: processes the 4 features (kept in eval mode, never trained)
        self.quantum = ML.QuantumLayer.simple(
            input_size=4,
        ).eval()
        # Classical output layer: quantum → 8 → 3
        self.classical_out = nn.Sequential(
            nn.Linear(self.quantum.output_size, 8),
            nn.ReLU(),
            nn.Dropout(0.1),
            nn.Linear(8, 3),
        )

    def forward(self, x):
        # TODO Use the commented return if you want to use the reservoir algorithm with the processor.
        # return self.classical_out(proc.forward(self.quantum.eval(), x))
        return self.classical_out(self.quantum.eval()(x))

    def parameters(self, recurse: bool = True):
        # Expose only the classical parameters so the optimizer leaves the reservoir untouched.
        # A fresh iterator is returned on every call: a generator stored in __init__
        # would be exhausted after its first use.
        return self.classical_out.parameters(recurse=recurse)
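One detail matters when overriding parameters(): nn.Module.parameters() returns a one-shot iterator, so the method should hand out a new iterator on every call rather than a stored one. The sketch below shows the difference in plain Python; Holder and its method names are hypothetical stand-ins for a module and its parameters.

```python
class Holder:
    def __init__(self):
        self.weights = [1.0, 2.0, 3.0]
        # Generator created once and stored
        self.cached = (w for w in self.weights)

    def cached_params(self):
        # Returns the same generator object on every call
        return self.cached

    def fresh_params(self):
        # Returns a new iterator on every call
        return iter(self.weights)


h = Holder()
first = list(h.cached_params())
second = list(h.cached_params())
print(first, second)  # [1.0, 2.0, 3.0] []
print(list(h.fresh_params()), list(h.fresh_params()))  # [1.0, 2.0, 3.0] [1.0, 2.0, 3.0]
```

A second consumer of the stored generator, for example a parameter count after the optimizer has been built, would see no parameters at all.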

This could easily be run with the processor, but it would take a lot of time. Since the reservoir always returns the same output for a given input (it is not trained and receives the same inputs at every epoch), we can compute all of the reservoir outputs once and reuse them. The next two code cells implement the same reservoir as earlier, but in a more resource-efficient way.
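A rough count shows how much work the precomputation saves. The sketch assumes one reservoir evaluation per sample per forward pass, a test-set evaluation at every epoch (as in the training loop later in this notebook), and the 200 epochs set in section 4; shots and cloud queueing are not modeled.

```python
n_train, n_test = 120, 30  # sizes of the Iris splits loaded above
epochs = 200               # value set later in this notebook

# Reservoir inside the model: every epoch forwards the train and test sets again
evals_without_cache = epochs * (n_train + n_test)

# Precomputed reservoir: every sample goes through the reservoir exactly once
evals_with_cache = n_train + n_test

print(evals_without_cache, evals_with_cache)  # 30000 150
```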

Let’s compute all of the reservoir outputs and store them in a dictionary.

[7]:

reservoir = ML.QuantumLayer.simple(
    input_size=4,
).eval()
output_size = reservoir.output_size

## TODO Uncomment to use the processor
# train_outputs = proc.forward(reservoir, X_train)
# train_reservoir_map = {tuple(x.tolist()): output for x, output in zip(X_train, train_outputs)}
# test_outputs = proc.forward(reservoir, X_test)
# test_reservoir_map = {tuple(x.tolist()): output for x, output in zip(X_test, test_outputs)}

## TODO Comment to use the processor
reservoir.eval()
with torch.no_grad():
    train_outputs = reservoir(X_train)
    train_reservoir_map = {
        tuple(x.tolist()): output for x, output in zip(X_train, train_outputs)
    }
    test_outputs = reservoir(X_test)
    test_reservoir_map = {
        tuple(x.tolist()): output for x, output in zip(X_test, test_outputs)
    }

# Merge both maps so that any sample can be looked up by its feature tuple
reservoir_map = {**train_reservoir_map, **test_reservoir_map}

[8]:

# Print every tenth entry of the map
for i, (key, value) in enumerate(reservoir_map.items()):
    if (i + 1) % 10 == 0:
        print(f"{key}: {value}")

(0.4166666567325592, 0.2916666567325592, 0.49152541160583496, 0.4583333432674408): tensor([0.1162, 0.0151, 0.1026, 0.0622, 0.0703, 0.2883, 0.0501, 0.2292, 0.0571,

0.0089])

(0.02777777798473835, 0.4166666567325592, 0.050847455859184265, 0.0416666679084301): tensor([0.0642, 0.0064, 0.1750, 0.0728, 0.1062, 0.2058, 0.0913, 0.1921, 0.0747,

0.0115])

(0.0555555559694767, 0.125, 0.050847455859184265, 0.0833333358168602): tensor([0.0554, 0.0051, 0.1468, 0.0877, 0.1154, 0.2244, 0.0828, 0.2132, 0.0641,

0.0050])

(0.5833333134651184, 0.5, 0.5932203531265259, 0.5833333134651184): tensor([0.1190, 0.0214, 0.1024, 0.0502, 0.0673, 0.2867, 0.0374, 0.2349, 0.0620,

0.0187])

(0.1944444477558136, 0.625, 0.10169491171836853, 0.2083333283662796): tensor([0.0697, 0.0107, 0.1787, 0.0663, 0.0810, 0.2125, 0.0709, 0.2097, 0.0925,

0.0080])

(0.7222222089767456, 0.4583333432674408, 0.7457627058029175, 0.8333333134651184): tensor([0.1463, 0.0251, 0.0637, 0.0452, 0.0772, 0.2976, 0.0304, 0.2188, 0.0660,

0.0298])

(0.6388888955116272, 0.4166666567325592, 0.5762711763381958, 0.5416666865348816): tensor([0.1115, 0.0251, 0.1035, 0.0477, 0.0713, 0.2746, 0.0346, 0.2506, 0.0626,

0.0185])

(0.4722222089767456, 0.5833333134651184, 0.5932203531265259, 0.625): tensor([0.1298, 0.0162, 0.1002, 0.0562, 0.0610, 0.3019, 0.0434, 0.2142, 0.0607,

0.0165])

(0.7222222089767456, 0.5, 0.7966101765632629, 0.9166666865348816): tensor([0.1569, 0.0251, 0.0551, 0.0448, 0.0803, 0.3033, 0.0313, 0.2039, 0.0650,

0.0342])

(0.5277777910232544, 0.0833333358168602, 0.5932203531265259, 0.5833333134651184): tensor([0.1439, 0.0212, 0.0671, 0.0569, 0.0786, 0.2901, 0.0421, 0.2247, 0.0622,

0.0132])

(0.3888888955116272, 0.375, 0.5423728823661804, 0.5): tensor([0.1254, 0.0146, 0.1003, 0.0617, 0.0687, 0.2987, 0.0521, 0.2135, 0.0542,

0.0108])

(0.5555555820465088, 0.2083333283662796, 0.6610169410705566, 0.5833333134651184): tensor([0.1431, 0.0240, 0.0737, 0.0514, 0.0845, 0.2946, 0.0416, 0.2144, 0.0554,

0.0173])

(0.7222222089767456, 0.4583333432674408, 0.694915235042572, 0.9166666865348816): tensor([0.1464, 0.0210, 0.0575, 0.0501, 0.0646, 0.2976, 0.0293, 0.2286, 0.0753,

0.0295])

(0.25, 0.5833333134651184, 0.06779661029577255, 0.0416666679084301): tensor([0.0572, 0.0220, 0.1986, 0.0535, 0.1069, 0.1720, 0.0760, 0.2125, 0.0884,

0.0131])
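Each printed vector has 10 entries, and the entries appear to sum to 1, which is consistent with the reservoir returning a probability distribution over measurement outcomes. That interpretation is an assumption based on the printed values, not something stated in this notebook. A quick check on the first two vectors shown above:

```python
import math

# First two reservoir outputs, copied from the printout above (rounded to 4 decimals)
printed_vectors = [
    [0.1162, 0.0151, 0.1026, 0.0622, 0.0703, 0.2883, 0.0501, 0.2292, 0.0571, 0.0089],
    [0.0642, 0.0064, 0.1750, 0.0728, 0.1062, 0.2058, 0.0913, 0.1921, 0.0747, 0.0115],
]

for vector in printed_vectors:
    total = sum(vector)
    # The tolerance accounts for the rounding of the printed values
    print(len(vector), math.isclose(total, 1.0, abs_tol=1e-3))
```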

We can easily define the trainable classical model using this map.

[9]:

class HybridIrisClassifier(nn.Module):
    """
    Hybrid model for Iris classification:
    - Quantum reservoir processes the 4 features
    - Classical output layer for 3-class classification
    """

    def __init__(self, output_size: int = 1):
        super().__init__()
        self.output_size = output_size
        # Classical output layer: quantum → 8 → 3
        self.model = nn.Sequential(
            nn.Linear(output_size, 8), nn.ReLU(), nn.Dropout(0.1), nn.Linear(8, 3)
        )

    def forward(self, x: torch.Tensor):
        if x.dim() == 1:
            # unsqueeze is not in-place, so the result must be reassigned
            x = x.unsqueeze(0)
        # Look up the precomputed reservoir output of every sample in the batch
        input_to_classical = torch.empty(x.shape[0], self.output_size)
        for i, sample in enumerate(x):
            input_to_classical[i] = reservoir_map[tuple(sample.tolist())]
        return self.model(input_to_classical)
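The lookup by feature tuple relies on exact floating-point equality: it works here because the same tensors are used to build and to query the map, but any preprocessing applied in between would cause a KeyError. The sketch below illustrates the issue and one possible mitigation (rounding the keys); make_key is a hypothetical helper, and rounding can still separate values that sit right at a rounding boundary.

```python
def make_key(features, ndigits=None):
    """Build a dictionary key from a feature vector, optionally rounding each value."""
    values = [float(v) for v in features]
    if ndigits is not None:
        values = [round(v, ndigits) for v in values]
    return tuple(values)


cache = {make_key([0.25, 0.5]): "cached output"}
exact_hit = make_key([0.25, 0.5]) in cache            # identical values are found
drifted_hit = make_key([0.25 + 1e-9, 0.5]) in cache   # a tiny drift misses

rounded_cache = {make_key([0.25, 0.5], ndigits=6): "cached output"}
rounded_hit = make_key([0.25 + 1e-9, 0.5], ndigits=6) in rounded_cache

print(exact_hit, drifted_hit, rounded_hit)  # True False True
```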

4. Set the Training Parameters

You can adjust these parameters to see how they affect training and model performance.

[10]:

learning_rate = 0.01

number_of_epochs = 200

5. Train the Hybrid Model

Let’s train the model like a normal PyTorch module.

[11]:

import random

import numpy as np
import torch


def reset_seeds(s=0):
    # Seed every random number generator involved, for reproducible runs
    random.seed(s)
    np.random.seed(s)
    torch.manual_seed(s)
    torch.cuda.manual_seed_all(s)
    torch.backends.cudnn.deterministic = True
    torch.backends.cudnn.benchmark = False


reset_seeds(123)
model = HybridIrisClassifier(output_size=output_size)
optimizer = torch.optim.Adam(model.parameters(), lr=learning_rate)
criterion = nn.CrossEntropyLoss()
model.train()

# Training loop
for epoch in range(number_of_epochs):
    optimizer.zero_grad()
    loss = criterion(model(X_train), y_train)
    loss.backward()
    optimizer.step()

    # Track the test accuracy after every epoch
    model.eval()
    with torch.no_grad():
        preds = model(X_test).argmax(dim=1)
        accuracy = (preds == y_test).float().mean().item()
    model.train()

    if (epoch + 1) % 20 == 0:
        print(
            f"Epoch {epoch + 1} had a loss of {loss.item()} and a test accuracy of {accuracy}"
        )

Epoch 20 had a loss of 1.0754386186599731 and a test accuracy of 0.20000000298023224

Epoch 40 had a loss of 1.0080885887145996 and a test accuracy of 0.6666666865348816

Epoch 60 had a loss of 0.8952571749687195 and a test accuracy of 0.8333333134651184

Epoch 80 had a loss of 0.7466301321983337 and a test accuracy of 0.8666666746139526

Epoch 100 had a loss of 0.6050937175750732 and a test accuracy of 0.8999999761581421

Epoch 120 had a loss of 0.4987705945968628 and a test accuracy of 0.8999999761581421

Epoch 140 had a loss of 0.4063666760921478 and a test accuracy of 0.9333333373069763

Epoch 160 had a loss of 0.4019034206867218 and a test accuracy of 0.9333333373069763

Epoch 180 had a loss of 0.3282301127910614 and a test accuracy of 0.9333333373069763

Epoch 200 had a loss of 0.3218698501586914 and a test accuracy of 0.9333333373069763
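A useful reference point for reading these numbers is the loss of a classifier that assigns equal probability to all three classes. Cross-entropy for one sample is the negative log of the probability given to the correct class, and nn.CrossEntropyLoss averages it over the batch by default, so the chance-level loss can be worked out directly:

```python
import math

num_classes = 3
uniform_prob = 1 / num_classes

# Cross-entropy when every class receives the same probability
chance_loss = -math.log(uniform_prob)

print(f"{chance_loss:.4f}")  # 1.0986
```

The loss of about 1.075 at epoch 20 is only slightly below this value, which matches the low test accuracy reported at that point; the later epochs move well away from it.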

6. Evaluate the Model

After training, let’s evaluate our model on the test set and print the accuracy.

[12]:

# Evaluate on test set
model.eval()
with torch.no_grad():
    test_outputs = model(X_test)
    predictions = torch.argmax(test_outputs, dim=1)
    accuracy = (predictions == y_test).float().mean().item()
print(f"Test accuracy: {accuracy:.4f}")

Test accuracy: 0.9333

[13]:

number_of_runs = 10
accuracies = []

for i in range(number_of_runs):
    # Use a different seed for every run
    reset_seeds(i + 123)
    model = HybridIrisClassifier(output_size=output_size)
    optimizer = torch.optim.Adam(model.parameters(), lr=learning_rate)
    criterion = nn.CrossEntropyLoss()

    # Training loop
    model.train()
    for epoch in range(number_of_epochs):
        optimizer.zero_grad()
        loss = criterion(model(X_train), y_train)
        loss.backward()
        optimizer.step()

        model.eval()
        with torch.no_grad():
            preds = model(X_test).argmax(dim=1)
            accuracy = (preds == y_test).float().mean().item()
        model.train()
        # print(f"Epoch {epoch+1} had a loss of {loss.item()} and a test accuracy of {accuracy}")

    # Final evaluation of the model
    model.eval()
    with torch.no_grad():
        preds = model(X_test).argmax(dim=1)
        accuracies.append((preds == y_test).float().mean().item())

avg = torch.tensor(accuracies).mean().item()
std = torch.tensor(accuracies).std(unbiased=True).item()
print(f"Average accuracy: {avg:.4f} ± {std:.4f}")

Average accuracy: 0.9633 ± 0.0189
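The unbiased=True argument means the reported spread is the sample standard deviation, which divides by n - 1 instead of n. The standard-library sketch below shows the difference; the accuracy values are hypothetical placeholders, not the results of the runs above.

```python
import math
import statistics

# Hypothetical per-run accuracies, for illustration only
run_accuracies = [0.9333, 0.9667, 1.0, 0.9667, 0.9333]
n = len(run_accuracies)

mean_accuracy = statistics.mean(run_accuracies)
sample_std = statistics.stdev(run_accuracies)       # divides by n - 1, like unbiased=True
population_std = statistics.pstdev(run_accuracies)  # divides by n

# The two estimates differ by a factor of sqrt(n / (n - 1))
ratio = sample_std / population_std
print(math.isclose(ratio, math.sqrt(n / (n - 1))))  # True
```

With only 10 runs, the sample estimate is noticeably larger than the population one, so it is worth stating which of the two a reported ± value refers to.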

Conclusion

Even though MerLin is built as a simulation-first package, it is still possible to run and optimize quantum layers with the processor. However, a gradient-free optimizer such as COBYLA should be used in that case.

Also, if you want a better-performing reservoir based on the literature, you can try to reproduce the reservoir from “Quantum optical reservoir computing powered by boson sampling”. It is a good exercise to familiarize yourself with MerLin and, if you are not from a machine learning background, to learn about PCA.